
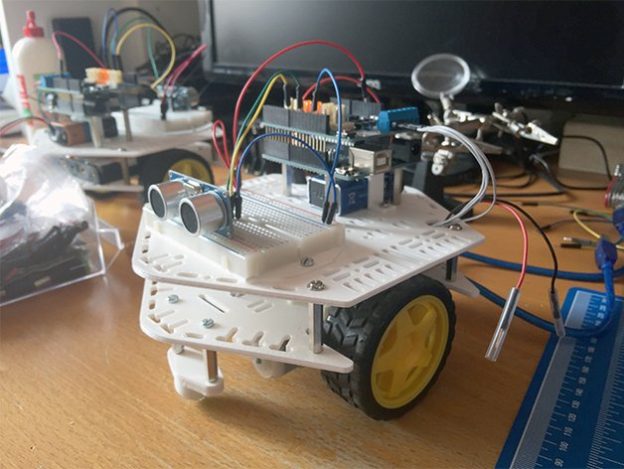

The following instructions will guide you through running the default remote control example on your DotBot board. This repository contains the source code for the DotBot's firmware. Loading this app onto the DotBot board makes the motors of the DotBot remote controllable by an nRF52840-DK running the gateway firmware, as can be seen in the above video. You need the .hex files of the DotBot firmware and of the Gateway firmware; you should end up with 2 files named 03app_dotbot.hex and 03app_dotbot_gateway.hex. Connect the nRF52840-DK to your computer through the micro USB cable.

DotBot: easy-to-use micro-robot for education and research purposes.

Rogerbot and Dotbot blocked by WP Engine hosting. We recently discovered a problem with our MOZ account which led to checking our host's settings.

A spider is a computer program that follows certain links on the web and gathers information as it goes. How to find one? In log files (/var/log/nginx or /var/log/apache). Bot user agent examples (Google, Baiduspider, DotBot, Exabot): Mozilla/4.0 (compatible MSIE 7.0 Windows NT 5.1), Mozilla/5.0 (compatible Baiduspider/2.0 +), Mozilla/5.0 (compatible DotBot/1.1, Exabot.

Robots Exclusion Protocol: the rules live in public/robots.txt, where the crawl delay is the number of seconds to wait between subsequent visits. Recommendations can also be given through meta tags: no index for all robots, or nosnippet, noodp, notranslate, noimageindex. Good bots read robots.txt and observe the recommendations. Feed the bot.
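Finding crawlers in access logs, as described above, can be sketched in a few lines of Ruby. This is a minimal illustration only: the `BOT_PATTERNS` list, the helper names, and the sample log lines are assumptions made up for the example, and matching on substrings of the User-Agent field is a simplification (user agents are trivially spoofed).

```ruby
# Hypothetical list of crawler names to look for (taken from the examples above).
BOT_PATTERNS = %w[Googlebot Baiduspider DotBot Exabot].freeze

# Return the first bot name found in a raw access-log line, or nil.
def bot_in_line(line)
  lowered = line.downcase
  BOT_PATTERNS.find { |name| lowered.include?(name.downcase) }
end

# Count hits per bot over any enumerable of log lines (an Array or an open File).
def count_bots(lines)
  counts = Hash.new(0)
  lines.each do |line|
    bot = bot_in_line(line)
    counts[bot] += 1 if bot
  end
  counts
end

# Made-up sample lines in the common "combined" log format.
SAMPLE_LINES = [
  '203.0.113.5 - - [01/Jan/2024:00:00:01 +0000] "GET / HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; DotBot/1.1)"',
  '198.51.100.7 - - [01/Jan/2024:00:00:02 +0000] "GET /a HTTP/1.1" 200 128 "-" "Mozilla/5.0 (compatible; Baiduspider/2.0)"',
  '192.0.2.9 - - [01/Jan/2024:00:00:03 +0000] "GET /b HTTP/1.1" 200 64 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"'
].freeze

p count_bots(SAMPLE_LINES)
```

To scan a real log, pass `File.foreach(path)` for a file under /var/log/nginx or /var/log/apache instead of the sample array.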

And block the IP! Tools: Nginx (configuration under /etc/nginx/) and Rack::Attack.

In robots.txt, a crawl delay can be set per crawler: User-agent: AhrefsBot with Crawl-delay: 10; for the Moz robots, User-agent: rogerbot with Crawl-delay: 10 and User-agent: DotBot with Crawl-delay: 10; for SEMRush, User-agent: SemrushBot. While Moz's crawler DotBot clearly enjoys the closest robots.txt profile to Google among the three major link indexes, there's still a lot of work to be done.

Whitelist: always allow requests from localhost. The rule opens with Rack::Attack.whitelist('Allow from localhost') do |request|, and requests are allowed if the return value of the block is truthy. The source also shows fragments of a class Rack::Attack::Request and of a rule that takes :limit => limit_proc, :period => period_proc.

Tracks: track requests from a special user agent with Rack::Attack.track("special_agent", limit: 6, period: 60.seconds) do |req|. With a limit and period set, the notification is triggered only when the limit is reached. Track it using ActiveSupport::Notifications: ActiveSupport::Notifications.subscribe("rack.attack") do |name, start, finish, request_id, req|.

Presently, there are three source IPv4 classifications defined in our GreyNoise Query Language (GNQL): benign, malicious, and unknown. Cybersecurity folks tend to focus quite a bit on the malicious ones since they may represent a clear and present danger to operations. However, there are many benign sources of activity that are always on the…

Dotbot is probably tied up with Moz in some way, so your assumptions might turn out right. 12:18 am on (gmt 0): Is dotbot still doing its stuff? A quick look at raw logs says it hasn't been around since August.
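The per-crawler crawl delays listed above can be read back out of a robots.txt file with a small parser. The sketch below is illustrative only: `crawl_delays` and `ROBOTS_SAMPLE` are hypothetical names, the grouping logic is simplified, and Crawl-delay itself is a non-standard directive that some crawlers ignore.

```ruby
# Parse robots.txt text into { "user-agent (lowercased)" => crawl delay in seconds }.
def crawl_delays(robots_txt)
  delays = {}
  agents = []       # user agents of the group currently being read
  in_rules = false  # true once a rule line has been seen in the current group

  robots_txt.each_line do |raw|
    line = raw.sub(/#.*/, "").strip          # drop comments and whitespace
    next if line.empty?

    field, value = line.split(":", 2).map(&:strip)
    next if value.nil?                       # not a "field: value" line

    case field.downcase
    when "user-agent"
      agents = [] if in_rules                # a new group starts here
      in_rules = false
      agents << value.downcase
    when "crawl-delay"
      in_rules = true
      agents.each { |agent| delays[agent] = value.to_f }
    else
      in_rules = true                        # Allow, Disallow, etc.
    end
  end
  delays
end

# Sample file built from the crawler names and delays mentioned above.
ROBOTS_SAMPLE = <<~TXT
  User-agent: AhrefsBot
  Crawl-delay: 10

  User-agent: rogerbot
  Crawl-delay: 10

  User-agent: DotBot
  Crawl-delay: 10
TXT

p crawl_delays(ROBOTS_SAMPLE)
```

Keys are lowercased because user-agent matching in robots.txt is case-insensitive.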

Even for a site as small as mine, that's a long gap.

Inside the notification subscriber, the check is if req.env['rack.attack.matched'] == "special_agent" && req.env['rack.attack.match_type'] == :track. Responses can be customized with Rack::Attack.blacklisted_response = lambda do |_env| and Rack::Attack.throttled_response = lambda do |env|. Rack::Attack returns 403 for blacklists by default; the example uses 503 because it may make the attacker think that they have successfully…

My trap: app/middleware/antibot_middleware.rb.

Dotbot is Moz's web crawler; it gathers web data for the Moz Link Index. dotBot Robotics is a spin-off of the GSCAR group in the Federal University of Rio de Janeiro.

How can a bot avoid these methods? Read the robots.txt file, send requests from different endpoints in TOR, change the User Agent, change the session, be polite and useful.
